
Updated: 2025-09-09

École Polytechnique Fédérale de Lausanne


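Overview

Tags

Oncology

Infections

Other diseases

Small-molecule drugs

ADC

CAR-T

Disease area score

See at a glance which disease areas the institution focuses on

No data

Technology platform

Technologies used most often in the organization's drugs

No data

Targets

Targets the organization develops most often

No data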

| Top five drug types | Count |
|---|---|
| Small-molecule drugs | 7 |
| ADC | 4 |
| Antibody | 1 |
| CAR-T | 1 |

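Associations

13 drugs associated with École Polytechnique Fédérale de Lausanne

| Target | Mechanism of action | Active indications | Inactive indications | Highest development phase | First approval country/region | First approval date |
|---|---|---|---|---|---|---|
| | DprE1 inhibitor | | - | Phase 2 | - | - |
| | STING agonist | | - | Preclinical | - | - |
| | CTSL inhibitor | | - | Preclinical | - | - |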

55 clinical trials associated with École Polytechnique Fédérale de Lausanne

NCT07139756

Deciphering the Mechanisms of Central Blood Pressure Regulation in Patients With Parkinson Disease Associated With Orthostatic Hypotension: A 2-phase Observational Study With Healthy Participants and Patients With Parkinson's Disease

Phase 1 objective: test the feasibility of using a 3 Tesla MRI scanner instead of a 7 Tesla MRI scanner to measure brainstem responses to LBNP in healthy participants.

Phase 2 primary objective: compare the brainstem responses to LBNP in patients with PD associated with OH to PD patients without OH using BOLD fMRI.

Start date: 2025-10-01

Sponsor/Collaborator:

NCT06243952

Brain Controlled Spinal Cord Stimulation in Participants with Spinal Cord Injury for Lower Limb Rehabilitation

The purpose of this clinical study is to evaluate the preliminary safety and effectiveness of using a cortical recording device (ECoG) combined with lumbar targeted epidural electrical stimulation (EES) of the spinal cord to restore voluntary motor functions of lower limbs in participants with chronic spinal cord injury suffering from mobility impairment.

The goal is to establish a direct bridge between the motor intention of the participant and the spinal cord below the lesion, which should not only improve or restore voluntary control of leg movement and support immediate locomotion, but also promote neurological recovery when combined with neurorehabilitation.

Start date: 2024-05-03

NCT06295614

Study on Preliminary Safety and Efficacy of the ARC-IM Therapy to Alleviate Locomotor Deficits in People With Parkinson's Disease

The purpose of this clinical trial is to assess the preliminary safety and efficacy of the ARC-IM spinal cord stimulation therapy in alleviating locomotor deficits in individuals with Parkinson's disease. The ARC-IM Therapy employs epidural electrical stimulation (EES) to modulate leg muscle recruitment, with the aim of improving mobility deficits. The ultimate goal is to enhance the quality of life of people with Parkinson's disease.

Start date: 2024-02-14


38,978 publications (pharmaceutical) associated with École Polytechnique Fédérale de Lausanne

2025-12-31 · Journal of Natural Fibers

Influence of Growth Location and Extraction Method on the Properties of Cameroonian Musa acuminata Leaf Vein Fibers

Authors: Michaud, Véronique ; Fokam, Maurane Gaëlle Fokam ; Chengoué, Anatole Mbouyap ; Fokam, Christian Bopda ; Halawani, Nour ; Rougier, Valentin ; Kenmeugne, Bienvenu

Banana plants form an abundant source of agricultural waste, which can be exploited to extract fibers. Whereas the trunk and pseudo-stem are currently exploited, leaf ribs, which are several meters long, could form a valuable source of fibers for use in structural composite materials, if their properties, reproducibility across locations, and extraction methods are well adapted. Thus, leaf ribs of the Grande Naine cultivar of Musa acuminata were collected in Cameroon in the High Penja plantation in the Littoral Region, and in a rural plantation in the Center Region. Fibers were extracted using water retting, water boiling and caustic soda to assess the role of extraction on properties. The fibers had a density, appearance, chemical composition, and thermal degradation close to those of other banana fibers, an average length over 2 m, and diameters in the 125-150 μm range. Water boiling and soda treatment led to increased tensile properties, in the 15 GPa range for Young's modulus and 350-400 MPa for failure strength. A Weibull statistical analysis of the fiber failure revealed a slight influence of the growth location, and a major influence of the fiber extraction method, with the water boiling method showing a good balance between properties and ease of extraction.

2025-12-31·PLATELETS

The renin-angiotensin system in healthy human platelets: expressed but inactive

Article

Authors: Béguelin, Charles ; Sanglard, Gabriel ; Molot, Max ; Lu, Philip H. J. ; Panosetti, François ; Zouaghi, Yassine ; Martins Lima, Augusto ; Günçü, Rodi ; Saint Auguste, Damian S. ; Cuenot, François M. ; Magrini, Céline ; Stergiopulos, Nikolaos ; Martins Cavaco, Ana C. ; Nunes, Allancer D. C.

Platelets play a crucial role in arterial thrombus formation, offering potential for new antiplatelet therapies with reduced bleeding risk. Here, we investigated the role of the renin-angiotensin system (RAS) in human platelets and explored its potential link to COVID-19 coagulopathy. Experiments were performed ex vivo on healthy human platelets. The expression of RAS receptors (Mas, MrgD, ACE, ACE2, AT1 and AT2) was evaluated using western blot and immunofluorescence. Platelets were incubated in vitro with either Captopril or different RAS peptides including Alamandine, Angiotensin-I, Angiotensin-II, Angiotensin-(1-7), and Angiotensin-(1-9). Platelet adhesion was measured by spectrophotometry using BCECF fluorescence. Platelet activation and aggregation were analyzed using aggregometry after stimulation with extracellular matrix proteins. ACE and ACE2 activity were assessed using Fluorescent Peptides (FPS). We demonstrated that healthy human platelets express all the tested RAS receptors. However, RAS peptides did not modulate platelet adhesion or aggregation despite a wide range of concentrations tested. ACE activity was detected in platelet lysates, but it was not inhibited by Captopril, while ACE2 activity was undetectable. Our findings suggest that while RAS receptors are expressed in platelets, RAS peptides do not impact platelet function, at least in our experimental setting. COVID-19 coagulopathy may occur independently of the RAS.

2025-11-01·NUCLEAR INSTRUMENTS & METHODS IN PHYSICS RESEARCH SECTION A-ACCELERATORS SPECTROMETERS DETECTORS AND ASSOCIATED EQUIPMENT

Microlens-enhanced SiPMs for the LHCb SciFi tracker Upgrade II: Update and recent results

Authors: Ronchetti, F. ; Blanc, F. ; Schneider, O. ; Shchutska, L. ; Marchevski, R. ; Zunica, G. ; Curras-Rivera, E. ; Haefeli, G.

The Scintillating Fiber (SciFi) tracker has been operated in the current LHCb experiment layout since 2022 and will continue to take data until 2030. The high radiation environment damages the detector components and reduces the overall light yield, degrading the hit efficiency. Moreover, LHCb Upgrade II will add timing information in different sub-detectors, which requires an adequate amount of detected light to ensure the requested performance. Microlens-enhanced Silicon PhotoMultipliers (SiPMs) can improve photon detection efficiency in the SciFi tracker upgrade. Results show an improvement in both Single Photon Time Resolution and SciFi time resolution, when compared to conventional coated SiPMs, due to higher light collection efficiency.

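69 news items (pharmaceutical) associated with École Polytechnique Fédérale de Lausanne

2025-08-29 · 生物世界 (BioWorld)

Written by: Wang Cong (王聪)

Edited by: Wang Duoyu (王多鱼)

Layout: Shui Chengwen (水成文)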

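Protein-protein interactions (PPIs) are at the core of all key biological processes. Designing binder proteins that precisely bind a specific protein is therefore regarded as a holy grail of protein design. However, the structural features that determine protein-protein interactions are complex, which makes binder design challenging: traditional approaches often take months, with success rates below 1%.

Now, a paper newly published in Nature presents BindCraft, a technology that overturns this situation. A single round of computational design, with no experimental optimization, can yield effective binders, with success rates of 10%-100%.

The study was published in Nature on 27 August 2025 under the title "One-shot design of functional protein binders with BindCraft". The authors are from École Polytechnique Fédérale de Lausanne (EPFL), the Massachusetts Institute of Technology (MIT) and other institutions.

The study developed BindCraft, a de novo protein design platform for one-shot design of functional binders. It is an open-source, automated pipeline for de novo design of protein binders, with success rates between 10% and 100%. BindCraft uses the weights of AlphaFold2 to generate binders with high, nanomolar-level affinity, without high-throughput screening or experimental optimization, even when no binding site is known.

Using BindCraft, the team designed binders against a range of challenging targets, including cell-surface receptors, common allergens, de novo designed proteins, and multi-domain nucleases (for example CRISPR-Cas9). The team demonstrated the function and therapeutic potential of these de novo binders by reducing IgE binding to birch pollen allergen in patient-derived samples, modulating Cas9 gene-editing activity, and reducing the cytotoxicity of a foodborne bacterial enterotoxin. Finally, the team used designed binders specific to cell-surface receptors to redirect adeno-associated virus (AAV) capsids for targeted gene delivery. The work is an important step in computational design toward a "one design-one binder" approach, with great potential in therapeutics, diagnostics and biotechnology.

The core advance in BindCraft is to repurpose AlphaFold2, the AI model for protein structure prediction, in reverse. Traditional methods require designing millions of candidate molecules and then screening them further, whereas BindCraft uses backpropagation directly, letting the AI work backward from the target protein structure to a matching, entirely new binder.

BindCraft achieves its marked gain in design efficiency through dynamic modeling (the structures of the target and the binder are optimized together), intelligent evolution (a neural network iteratively optimizes the amino acids on the protein surface), and dual filtering (a two-part quality check, combining AlphaFold confidence with physics-based rules, that eliminates unreliable designs). Success rates range from 10% to 100%, averaging 46.3%, and the designed binders reach nanomolar binding strength, comparable to antibody drugs.

To verify how binders designed with BindCraft perform in practice, the team ran a series of design and testing campaigns on cell-surface receptors, common allergens, de novo designed proteins, and multi-domain nucleases (for example CRISPR-Cas9).

1. Designing antibody drugs: the team designed binders against therapeutically relevant cell-surface receptors, including PD-1, PD-L1, IFNAR2 and CD45. Binders with nanomolar affinity were found without large-scale design and screening, which could accelerate antibody drug development.

2. Blocking allergens: the team studied common allergies. A binder designed against the birch pollen allergen Bet v1 inserted into its antigen pocket, and tests with patient serum showed that it blocked 50% of allergy antibody binding. Binders designed against the dust mite allergens Der f7 and Der f21 precisely covered the disease-causing epitopes, and crystal structures confirmed a deviation of only 0.3 nm from the design models.

3. Regulating CRISPR gene editing: to address the off-target risk of the gene-editing tool CRISPR-Cas9, the team used BindCraft to design entirely new inhibitor proteins. The designed proteins bound precisely to the REC1 nucleic acid-binding domain of Cas9, and cell experiments confirmed that they significantly reduced CRISPR-Cas9 gene-editing activity in HEK293 cells.

4. Neutralizing a lethal bacterial toxin: for the pore-forming toxin CpE of Clostridium perfringens, a food-poisoning pathogen, the designed proteins locked onto the cell-surface receptor CLDN1 and thereby blocked the toxin's binding site. Cell experiments confirmed that they completely eliminated toxin-induced cell death, with an effect equivalent to that of the natural inhibitor.

5. Engineering AAV: to improve the gene delivery capability of AAV, the team used BindCraft to design miniature binders targeting HER2 and PD-L1 and integrated them into the AAV capsid. The engineered AAV specifically targeted cancer cells expressing these two proteins.

Overall, BindCraft not only addresses the long-standing success-rate bottleneck in protein design but also offers direct solutions in allergy treatment, safe regulation of gene editing, neutralization of lethal toxins, and targeted gene therapy. In addition, the team has open-sourced the technology, so that ordinary laboratories can also design custom proteins. The work is an important step in computational design toward a "one design-one binder" approach and may reshape the future of drug development, disease diagnosis and treatment, and biotechnology.

Paper link: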

https://www-nature-com.libproxy1.nus.edu.sg/articles/s41586-025-09429-6


Gene therapy

2025-07-30 · 生物谷 (Bioon)

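CAR-T (Chimeric Antigen Receptor T-Cell Immunotherapy) is a cell therapy that has existed for many years but was only refined and brought into clinical use in recent years. It is markedly effective in acute leukemia and non-Hodgkin lymphoma and is considered one of the most promising approaches to treating tumors. Like any technology, CAR-T went through a long evolution, and over the course of that evolution it has gradually matured.

The key to this treatment strategy is an artificial receptor, called a chimeric antigen receptor (CAR), that recognizes target cells; after genetic modification, the patient's T cells express this CAR. In human clinical trials, scientists extract some of the patient's T cells through a process similar to dialysis and then genetically modify them in the laboratory, introducing the gene that encodes the CAR so that the T cells express the new receptor. The modified T cells are expanded in the laboratory and then infused back into the patient. These T cells use the CAR they express to bind molecules on the surface of target cells. The binding triggers an internal signal that activates the T cells so strongly that they rapidly destroy the target cells.

In recent years, beyond acute leukemia and non-Hodgkin lymphoma, improved CAR-T immunotherapies have also been used against solid tumors, autoimmune diseases, HIV infection and heart disease, giving the approach a wider range of applications. This roundup reviews the latest advances in CAR-T cell therapy.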

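1. Immunotherapy breakthrough! Nature: unlocking CREM, a key regulator in NK cells, brings new promise for CAR-NK cell therapy

DOI: 10.1038/s41586-025-09087-8

At the frontier of cancer treatment, immunotherapy is becoming a new hope against cancer. In recent years CAR-T cell therapy has achieved marked success in hematologic malignancies, but its use in solid tumors still faces many challenges. Meanwhile, natural killer (NK) cells, immune cells with strong antitumor activity, have been drawing growing attention from researchers.

In a recent report in Nature titled "CREM is a regulatory checkpoint of CAR and IL-15 signalling in NK cells", scientists from The University of Texas MD Anderson Cancer Center and other institutions identified CREM as a key regulator in NK cells, bringing new promise for the development of CAR-NK cell therapy.

Although CAR-NK cell therapy has shown great potential in preclinical studies, little is known about the specific molecular mechanisms that regulate its function. This study set out to explore the molecular regulation of CAR-NK cells in depth in order to improve their antitumor efficacy.

The researchers worked mainly with NK cells derived from human umbilical cord blood, genetically engineered to express a CAR against a specific tumor antigen. The study also used several human tumor cell lines, such as Raji (Burkitt lymphoma), SKOV3 (ovarian cancer) and UMRC3 (renal cancer), to assess the antitumor activity of the CAR-NK cells. The researchers applied a range of advanced techniques, including single-cell RNA sequencing (scRNA-seq), flow cytometry, mass cytometry (CyTOF), chromatin immunoprecipitation sequencing (ChIP-seq) and gene editing (CRISPR-Cas9), to analyze the role of CREM in NK cells at the levels of gene expression, protein and epigenetics.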

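2. AACR 2025: a novel ICAM-1-targeted CAR-T cell therapy shows promise for patients with advanced thyroid cancer

Original title: CT206 - ICAM-1 directed chimeric antigen receptor (CAR) T cells (AIC100) in patients with advanced thyroid cancers: Clinical and translational data from the phase 1 dose escalation study

In a new phase 1 clinical study, researchers from The University of Texas MD Anderson Cancer Center reported that AIC100, a novel chimeric antigen receptor (CAR) T cell therapy targeting the ICAM-1 protein, showed encouraging responses and acceptable safety in patients with two types of advanced thyroid cancer.

Samer Srour, associate professor of Stem Cell Transplantation and Cellular Therapy at MD Anderson, presented the results of the phase 1 trial for the first time at the American Association for Cancer Research (AACR) Annual Meeting 2025.

Treatment options for anaplastic thyroid cancer (ATC) and relapsed/refractory poorly differentiated thyroid cancer (PDTC) are limited and the prognosis is poor. The promising early results of CAR-T cell therapy in these two thyroid cancers indicate progress in bringing the benefits of CAR-T cell therapy to patients with solid tumors. Among the 9 patients treated at dose level 2 or 3, clear tumor shrinkage was observed in 22% and disease control in 56%.

Srour said, "The responses observed in the two dose cohorts are encouraging and demonstrate the potential of AIC100 for treating these extremely aggressive thyroid cancers. This cancer is among the deadliest and most aggressive, and because current treatment options are limited, most patients have a very poor prognosis, with only six months or less."

AIC100 is a third-generation CAR-T cell therapy that eliminates tumor cells by binding ICAM-1 on their surface. The CAR product co-expresses a protein called somatostatin receptor 2, which allows clinicians to monitor treatment with a specialized positron emission tomography (PET) scan.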

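3. NEJM: new clinical study suggests next-generation "armored" CAR-T cell therapy may treat lymphoma more effectively

DOI: 10.1056/NEJMoa2408771

In a phase 1 clinical study in patients with B-cell lymphoma, a next-generation "armored" CAR-T cell therapy produced encouraging results. These patients' lymphoma had remained uncured after multiple rounds of other cancer treatments, including commercially available CAR-T cell therapies. The new therapy reduced signs of cancer in 81% of patients and produced complete remission in 52%, and some of the earliest treated patients have had durable remissions of two years or longer. Researchers from the Perelman School of Medicine at the University of Pennsylvania published the study in NEJM.

CAR-T cell therapy is a personalized cancer immunotherapy first successfully developed by Carl June, MD, and his team at the University of Pennsylvania. It has transformed the treatment of many blood cancers, but more than 50% of lymphoma patients who receive existing CAR-T cell therapies do not achieve long-term remission.

Of the seven CAR-T cell therapy products approved by the US Food and Drug Administration (FDA), four are used to treat various types of B-cell lymphoma. Patients whose cancer relapses or becomes resistant after CAR-T cell therapy have a poor prognosis and almost no other options. Earlier studies showed that retreating these patients with existing CAR-T cell therapies does not work well.

Jakub Svoboda, MD, associate professor of Hematology-Oncology at Penn Medicine's Abramson Cancer Center, said, "I am delighted that this next-generation CAR-T cell therapy created at the University of Pennsylvania is so effective for patients who have already tried every available treatment for lymphoma. We are also pleased to see that the toxicity of this new product is no different from what we have seen with commercial CARs."

Apart from the known side effects of CAR-T cell therapy (including cytokine release syndrome and neurotoxicity), all of which were successfully managed, the addition of IL18 did not lead to any new or unexpected safety problems. The researchers also found that the type of CAR-T cell therapy a patient had previously received may affect the efficacy of huCART19-IL18.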

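4. Blood: a novel CAR-T cell therapy may treat patients with refractory CD30+ lymphoma more effectively

DOI: 10.1182/blood.2024026758

Hodgkin lymphoma and other CD30+ lymphomas pose a major challenge to medicine, especially in refractory or relapsed cases, where conventional therapies have so far had limited effect.

In a new phase 1 clinical study, researchers from the Sant Pau Research Institute, working with Sant Pau Hospital and the Josep Carreras Leukaemia Research Institute, developed an innovative CAR-T cell therapy targeting the CD30 protein (HSP-CAR30) that is highly effective in patients with refractory CD30+ lymphoma. Specifically, this new CAR-T30 therapy promotes the expansion of memory T cells, producing durable responses and improving clinical outcomes. The results were published in Blood.

CAR-T cell therapy has recently become a promising alternative for treating hematologic malignancies, with very positive results in B-cell leukemia and lymphoma. However, its use in CD30+ lymphoma has been limited because the genetically modified T cells lack persistence and patients relapse at a high rate. In addition, the very small number of clinical trials in this area has hindered the development of new solutions.

Thanks to advances in genetic engineering and biotechnology, the researchers overcame these challenges and developed HSP-CAR30, an optimized version of CAR-T cell therapy that uses new strategies to improve the function and persistence of the therapeutic T cells. The advance is a milestone in the fight against this type of cancer and opens new possibilities for patients who previously had almost no treatment options.

The phase 1 trial enrolled 10 patients with relapsed or refractory classical Hodgkin lymphoma or CD30+ T-cell lymphoma and produced very positive results.

Javier Briones, head of the Hematology Department at Sant Pau Hospital, said, "Most striking is the 100% overall response rate, which is extremely rare in patients who have received multiple lines of therapy. In addition, 50% of patients achieved complete remission, meaning that the disease could not be detected in imaging studies and clinical analyses."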

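5. Nat Cancer: age-related decline in NAD levels leads to CAR-T cell dysfunction

DOI: 10.1038/s43018-025-00982-7

As people age, their immune system gradually becomes less efficient, which poses a challenge for cancer therapies that depend on immune cells. In a new study published in Nature Cancer, a team from the University of Lausanne, Lausanne University Hospital, Geneva University Hospitals and École Polytechnique Fédérale de Lausanne showed that this age-related immune decline has a quantifiable effect on CAR-T cell therapy, one of the most advanced forms of cancer immunotherapy today.

CAR-T therapy genetically modifies a patient's T cells so that they recognize and destroy cancer cells. The new study found, however, that CAR-T cells derived from aged mice have impaired mitochondrial function, reduced "stemness" and weaker antitumor activity. The culprit is a decline in levels of nicotinamide adenine dinucleotide (NAD), which is essential for cellular energy metabolism and mitochondrial function.

First author Helen Carrasco Hope said, "CAR-T cells from older individuals have metabolic defects and are significantly less effective. What is exciting is that by restoring NAD levels we rejuvenated these aged CAR-T cells and reactivated their antitumor function in preclinical models."

She added, "These findings reinforce the view that aging fundamentally reshapes immune cell function and metabolism, and they underline the urgency of accurately modeling age in preclinical studies, so that CAR-T cell therapy development better reflects the real cancer population, most of whom are older patients."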

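6. Lancet Rheumatol: first report of local immune effector cell-associated toxicity syndrome during CD19 CAR-T cell treatment of autoimmune disease

DOI: 10.1016/S2665-9913(25)00091-8

In a new study, researchers from the University of Erlangen-Nuremberg identified a previously undocumented organ-specific toxicity associated with CD19-targeted CAR-T cell treatment of autoimmune disease. The phenomenon, called local immune effector cell-associated toxicity syndrome (LICATS), affected 77% of patients and left no lasting complications. The results were published in Lancet Rheumatology.

In the study, the researchers used an observational analysis to record organ-directed reactions after CD19 CAR-T cell infusion. Between March 2021 and October 2024, a total of 39 adults and adolescents were treated at the Erlangen and Düsseldorf centers with the second-generation CD19 CAR products MB-CART 19.1 or KYV-101 and completed at least 30 days of follow-up.

Clinicians tracked local symptoms, changes in laboratory values, imaging and biopsy results, graded events from grade 1 (self-resolving) to grade 4 (requiring intensive care), and classified onset as early, intermediate or late. The researchers excluded systemic cytokine release syndrome (CRS) and required that events resolve spontaneously or after a brief course of glucocorticoids, in order to distinguish LICATS from a disease flare.

A total of 54 LICATS events were identified in 30 patients, most commonly skin rash (35%) and renal dysfunction (22%). The median onset was 10 days after infusion, and the median duration was 11 days. All events occurred during B-cell aplasia and only in organs previously affected by the patient's autoimmune disease.

About 65% of episodes resolved without treatment, 30% responded to a short glucocorticoid taper, and only 3 events required readmission. Three events classified as grade 3 prolonged the hospital stay. No patient required intensive care, and all LICATS events resolved without lasting complications.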
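The cohort figures quoted in this summary can be cross-checked with a few lines of arithmetic. The sketch below is only an illustration and is not part of the study. It assumes that the 39 treated patients are the denominator behind the reported 77% incidence, and all variable names are hypothetical.

```python
# Figures as quoted in the summary above; variable names are illustrative.
patients_treated = 39      # patients with at least 30 days of follow-up
patients_with_licats = 30  # patients with one or more LICATS events
licats_events = 54         # total LICATS events recorded
readmission_events = 3     # events that required readmission

# Assumption: the reported incidence uses all treated patients as denominator.
incidence_pct = 100 * patients_with_licats / patients_treated
events_per_affected_patient = licats_events / patients_with_licats
readmission_pct = 100 * readmission_events / licats_events

print(f"incidence: {incidence_pct:.0f}%")
print(f"events per affected patient: {events_per_affected_patient:.1f}")
print(f"events requiring readmission: {readmission_pct:.1f}%")
```

Under that assumption, 30 of 39 patients rounds to the reported 77%, and the 54 events correspond to a little under two events per affected patient.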

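7. Nat Cancer: delivering a synthetic antigen with lipid nanoparticles helps CAR-T cells recognize and eliminate solid tumors more effectively

DOI: 10.1038/s43018-025-00968-5

In a new study, researchers from the Georgia Institute of Technology created a two-in-one approach that tags tumor cells so that specially enhanced T cells from the patient's immune system can recognize and eliminate them. By teaching the immune system to spot cancers it would normally miss, the approach could one day become a universal strategy for some of the hardest cancers to treat, such as brain, breast and colon cancer. In laboratory tests the approach was effective against these cancers without harming healthy tissue. Importantly, it also prevented the cancer from recurring.

In the study, Gabe Kwong, associate professor of biomedical engineering at Georgia Tech, and his team found that they could tag tumors by using lipid nanoparticle-based mRNA delivery to express a synthetic antigen, a camelid single-domain antibody (VHH). They then used CAR-T cell therapy to train the body's immune system to seek out this synthetic antigen and eliminate the tagged tumor cells.

Kwong said, "Tumor cells are cunning. Most of the time immune cells basically cannot see them, because they come from our own tissue. Normally, if you want to design T cells that target cancer, you need to work out the signature of each different cancer before you can design T cells that target it. In this study, the CAR-T cells we designed recognize the synthetic antigen, which makes this a universal platform."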

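8. Cancer Res: targeting PRC2 may make CAR-T cells more effective against hematologic malignancies

DOI: 10.1158/0008-5472.CAN-24-1643

At present, half of patients with non-Hodgkin lymphoma and acute lymphoblastic leukemia respond poorly to CAR-T cell treatment. The therapy involves collecting the patient's own defense cells (T cells), genetically modifying them in the laboratory so that they can destroy tumor cells, and reinfusing them into the same patient. These refractory cases usually relapse after conventional immunotherapy. To overcome this problem, Brazilian researchers developed a more potent CAR-T cell. The results were published in Cancer Research.

First author Maria Letícia Rodrigues Carvalho said, "CAR-T cell immunotherapy is revolutionary and has saved many lives in recent years. However, a considerable share of patients still do not respond to it. We tested a series of drugs on CAR-T cells, and one of them showed promise by inhibiting the epigenetic changes (related to gene expression patterns) that make these cells ineffective against these two types of blood tumor."

As the authors explain, non-Hodgkin lymphoma mainly affects middle-aged people, whereas patients with lymphoblastic leukemia are mainly children. The experiments were performed on tumor cells (in vitro) and in mice (in vivo) and are a first step toward future human clinical trials.

Of the drugs tested on CAR-T cells, the most promising was an inhibitor of a protein complex called PRC2. In healthy individuals, these proteins are needed to induce the expression of genes that restrain T cells so that they do not attack healthy cells.

Corresponding author Tiago da Silva Medina explained, "In cancer, however, what matters is that no such inhibitory factors remain, so that the tumor can be completely eliminated. Although CAR-T cell immunotherapy is based precisely on removing these inhibitory factors, some of them persist. What we did was remove the ones that prevent a better response to non-Hodgkin lymphoma and acute lymphoblastic leukemia."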

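9. Does CAR-T cell therapy cause "brain fog"? Cell: first study to reveal the cellular mechanism by which CAR-T cell therapy causes mild cognitive impairment during cancer treatment

DOI: 10.1016/j.cell.2025.03.041

CAR-T cell therapy is one of the standout achievements of cancer immunotherapy. It genetically modifies a patient's T cells to express a chimeric antigen receptor (CAR) that specifically recognizes cancer cells, so that the cells destroy the cancer precisely. The technology has been used successfully in several blood cancers, including acute lymphoblastic leukemia, multiple myeloma and certain types of lymphoma, and has shown great potential in clinical trials in solid tumors.

As CAR-T cell therapy has come into wide use, however, a worrying problem has emerged: patients often report "brain fog" after treatment, in the form of memory loss and difficulty concentrating. A new study led by Stanford University School of Medicine and published in Cell sheds light on the cognitive impairment that CAR-T cell therapy may cause.

Since CAR-T cell therapy was approved for acute lymphoblastic leukemia in 2017, its use in cancer treatment has kept expanding. Yet although patients have reported declines in cognitive function, research on the issue has lagged. Study leader Michelle Monje, professor of pediatric neuro-oncology at Stanford University School of Medicine, stressed: "We need a full understanding of the long-term effects of CAR-T cell therapy, including this newly identified immunotherapy-related cognitive impairment (IRCI) syndrome, so that we can develop treatments for it."

The team ran extensive experiments in mouse models to test the effect of CAR-T cell therapy on cognitive function. They built several mouse models, including diffuse intrinsic pontine glioma (DIPG), acute lymphoblastic leukemia (ALL) and osteosarcoma, to assess the cognitive effects of CAR-T cell therapy in central nervous system (CNS) and non-CNS cancers. Using the novel object recognition test (NORT) and the spontaneous alternation T-maze test (T Maze), the researchers found that mice treated with CAR-T cells showed mild cognitive impairment, seen as a blunted response to novel objects and poorer navigation in the maze, regardless of whether the cancer originated in the brain, had spread to the brain, or lay entirely outside the brain.

The study went on to reveal a potential mechanism for the impairment. After CAR-T cell therapy, microglia (the immune cells of the brain) were activated and released inflammatory molecules (such as cytokines and chemokines) that are toxic to oligodendrocytes (the brain cells that produce myelin). Damage to myelin reduces the efficiency of nerve signal transmission, which leads to cognitive impairment. Using flow cytometry, immunohistochemistry and electron microscopy, the researchers analyzed microglial activity, changes in the numbers of oligodendrocyte precursor cells (OPCs) and mature oligodendrocytes, and changes in myelin ultrastructure. They also measured cytokine and chemokine levels in cerebrospinal fluid (CSF) to assess the neuroinflammatory response after CAR-T cell therapy.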

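10. Leukemia: a novel CAR-T therapy may bring new hope to patients with leukemia and lymphoma

DOI: 10.1038/s41375-025-02573-y

CAR-T cell therapy has brought new hope to patients once considered beyond treatment. This innovative immunotherapy modifies the patient's own T cells so that they precisely recognize and attack cancer cells, and it has achieved marked success in several cancers of the blood and lymphatic system. Recently, Swedish scientists again demonstrated, in a pioneering study, the great potential of CAR-T cell therapy in terms of efficacy and reduced side effects.

CAR-T cell therapy has made breakthrough progress in certain cancers of the blood and lymphatic system, especially in patients for whom conventional treatments work poorly. Uppsala University Hospital in Sweden was the first institution in Europe to run a clinical trial of CAR-T cell therapy, and since 2019 several treatment centers in Sweden have begun using the therapy.

In a report published in Leukemia titled "Implementation of standard of care CAR-T-cell treatment for patients with aggressive B-cell lymphoma and acute lymphoblastic leukemia in Sweden", scientists from Skane University in Sweden and other institutions compiled the outcomes of Swedish patients treated with CAR-T cell therapy.

The study covered 93 adult patients with aggressive B-cell lymphoma (ABCL) who were treated between 2019 and 2024. Within 30 days of treatment, 66% of patients achieved complete remission, meaning the cancer had disappeared completely, and one year after treatment 53% of patients had not relapsed. The result is encouraging and compares favorably with international data. One particularly notable finding is that older patients (over 70) did not fare worse than younger patients, which has rarely been seen in earlier studies, because older patients are generally thought to face more risks and challenges in cancer treatment. The researchers also found that patients with higher-grade immune effector cell-associated neurotoxicity syndrome (ICANS) had improved progression-free survival (PFS). This suggests that the severity of ICANS may be linked to treatment efficacy, a finding that merits further study.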

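11. Nat Cancer: after allo-HCT, CAR-T cells or NK cells targeting HLA-DRB1 may treat acute myeloid leukemia

DOI: 10.1038/s43018-025-00934-1

A major goal of anticancer therapy is to kill tumor cells without affecting the surrounding normal cells. Many drugs are therefore designed against tumor-specific antigens, molecules expressed only by cancer cells. In some cancer types, however, including acute myeloid leukemia (AML), such specific antigens are hard to identify.

Patients with AML are usually treated with allogeneic hematopoietic stem cell transplantation (allo-HCT), in which they receive hematopoietic stem cells from a donor. Unfortunately, despite advances in allo-HCT, many AML patients still relapse.

In a new study, a multi-institutional team led by Osaka University described how a molecule called HLA-DRB1 can be used as a target for chimeric antigen receptor (CAR)-based therapy of AML. The results were published in Nature Cancer.

In CAR-based therapies, T cells are genetically modified to target and kill cells that express a specific molecule. CAR-T cells have been highly successful in patients with B-cell leukemia/lymphoma and multiple myeloma (MM). However, most CAR-T cell targets currently in clinical trials for AML are also expressed on normal cell types, which creates potential toxicity.

First author Shunya Ikeda said, "In our earlier MM study, we screened monoclonal antibodies (mAbs) to find ones that react with human MM samples but not with normal blood cells. Our goal was to use the same strategy to find AML-specific antigens."

The team began by screening thousands of mAbs against AML cells and eventually narrowed them down to 32 that bound AML cells specifically. One of them, KG2032, bound clearly to AML cells in more than 50% of patient samples. Using a sequencing strategy, they determined that KG2032 binds HLA-DRB1.

Corresponding author Naoki Hosen explained, "Interestingly, we found that KG2032 reacts with a specific subset of HLA-DRB1 in which the amino acid at position 86 of the protein is anything other than aspartic acid. KG2032 therefore reacts only with AML cells from individuals with an HLA-DRB1 mismatch, meaning that the patient carries this amino acid residue and the allo-HCT donor does not." (Bioon.com)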

Immunotherapy · Cell therapy

2025-06-27

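Since 2011, Switzerland has ranked first in the Global Innovation Index for 13 consecutive years. It is a major global source of innovation and the first country to establish an innovative strategic partnership with China, and it complements China very well in life and health sciences, advanced manufacturing, low-carbon technology and other fields. S²CUBE (the Sino-Swiss industrial collaborative innovation center), built jointly by BioBAY and Insight Tech, takes life sciences as its core field and aims to promote long-term exchange and cooperation between Swiss innovative technologies, companies, talent and ecosystems on one side and BioBAY and its park companies on the other. S²CUBE regularly publishes profiles of cutting-edge Swiss innovation projects in life and health sciences to promote two-way technical exchange, industry matchmaking and innovation partnerships between BioBAY, its park companies and Swiss innovators. This issue profiles FluoSphera, a company on the 2024 "Swiss Innovation Top 100" list that develops in vitro drug discovery technology.

FluoSphera, a Swiss medical technology company, was founded in 2021. Its in vitro drug discovery technology builds microphysiological systems outside the body to mimic natural human physiology, allowing fast and accurate analysis of the human response to systemic drug delivery. This improves the accuracy of drug evaluation, speeds up drug development and lowers the risk of development failure. FluoSphera is a spin-off of the University of Geneva, co-founded by Clelia Bourgoint and Gregory Segala. Clelia Bourgoint, the chief executive officer, holds a PhD in molecular biology and carried out in-depth research at the University of Geneva and the Max Planck Institute (Germany) on using advanced microscopy to develop new cancer therapies. Gregory Segala, the chief strategy officer, holds a PhD in pharmacology, has 14 years of experience in cell biology and has published 14 papers. FluoSphera has offices in Switzerland and in Boston, USA.

The cost of developing new drugs keeps rising, and development takes roughly 10-15 years. One reason is the low success rate of clinical trials: because of lack of clinical efficacy, unexpected clinical toxicity, poor drug properties and other factors, the failure rate of drug clinical trials is as high as 90%. Lack of clinical efficacy accounts for 40%-50% of these failures and is the leading cause. In addition, traditional animal models (such as mice) differ substantially from humans, cannot reproduce human tissue systems, do not accurately reflect the human response, and are unsuitable for innovative therapies such as antibody-drug conjugates, so current testing methods for new drugs fall short.

FluoSphera developed an in vitro drug discovery technology based on microphysiological systems. The technology can better assess the safety and efficacy of drug candidates in humans, lower the risk of clinical failure, reduce animal testing and accelerate drug discovery. It encapsulates and pools multiple types of human tissue cells and mimics the communication between several human organs, recreating natural human physiology outside the body. Researchers can thus test and study the human response to systemic drug delivery in vitro and carry out fast, accurate preclinical evaluation of drugs including small molecules, biologics and antibody-drug conjugates.

The technology first encapsulates different human tissue cells in capsule-like objects, so that the tissue types are physically separated from one another. The capsules can exchange soluble factors with each other, ensuring the exchange and diffusion of oxygen, nutrients and similar substances. This mimics the way human organs interact through the bloodstream and thereby reproduces natural human physiology. FluoSphera also assigns each capsule a unique color and code, so that each tissue type can be identified non-invasively without labeling the cells.

In drug testing, FluoSphera uses sophisticated microscopy analysis to detect biological activity. High-resolution imaging and an automated analysis pipeline capture readouts related to cytotoxicity and tissue-specific effects, giving a fast and reliable assessment of drug action. Differences between organs could bias the analysis, so FluoSphera's spatial localization technology tracks individual capsules and the human tissue each one represents, giving a full picture of drug effects before and after treatment and producing unbiased data. After imaging has identified a drug's effects, FluoSphera can also sort the tissues according to the imaging results for downstream omics analysis.

FluoSphera's technology can speed up drug development and lower development cost and failure risk. It allows customization for individual projects and supports high-throughput, modular development: it can rapidly evaluate more than 1,000 compounds with 28 readouts at a time, quickly and accurately identifying the drug candidates with the most clinical potential. It also enables whole-body human response testing at an early stage, giving a full view of a candidate's potential adverse effects. This helps optimize preclinical testing, reduces ongoing clinical trial costs and offers a new alternative to animal testing.

FluoSphera has established partnerships with leading companies such as AbbVie, L'Oréal and Revvity and has extended its technology to antibody-drug conjugate testing. In March 2023, FluoSphera closed a pre-seed round of USD 1 million, with investors including IndieBio New York, Mountain Labs and EFI Lake Geneva Ventures. In January 2024, FluoSphera received a further CHF 1.1 million from Innosuisse, the Swiss Innovation Agency, for a two-year research project to develop a new assay technology, carried out by FluoSphera with École Polytechnique Fédérale de Lausanne and the University of Geneva. FluoSphera plans to keep advancing its in vitro drug discovery technology to address unmet medical needs and shorten the time it takes for patients to gain access to more effective drugs.

Source: Investment Promotion Department. Editor: Yu Jiamin (于嘉敏). Reviewer: Ren Xu (任旭).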

Executive change

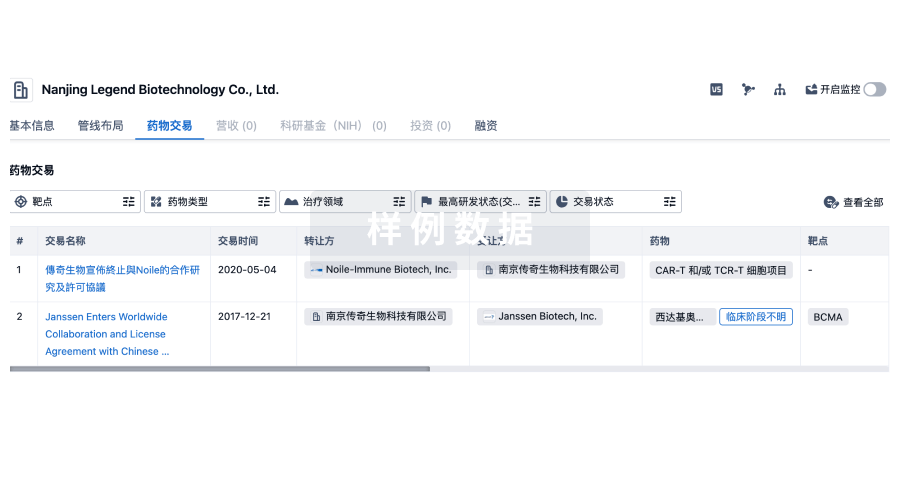

100 项与 École Polytechnique Fédérale de Lausanne 相关的药物交易

登录后查看更多信息

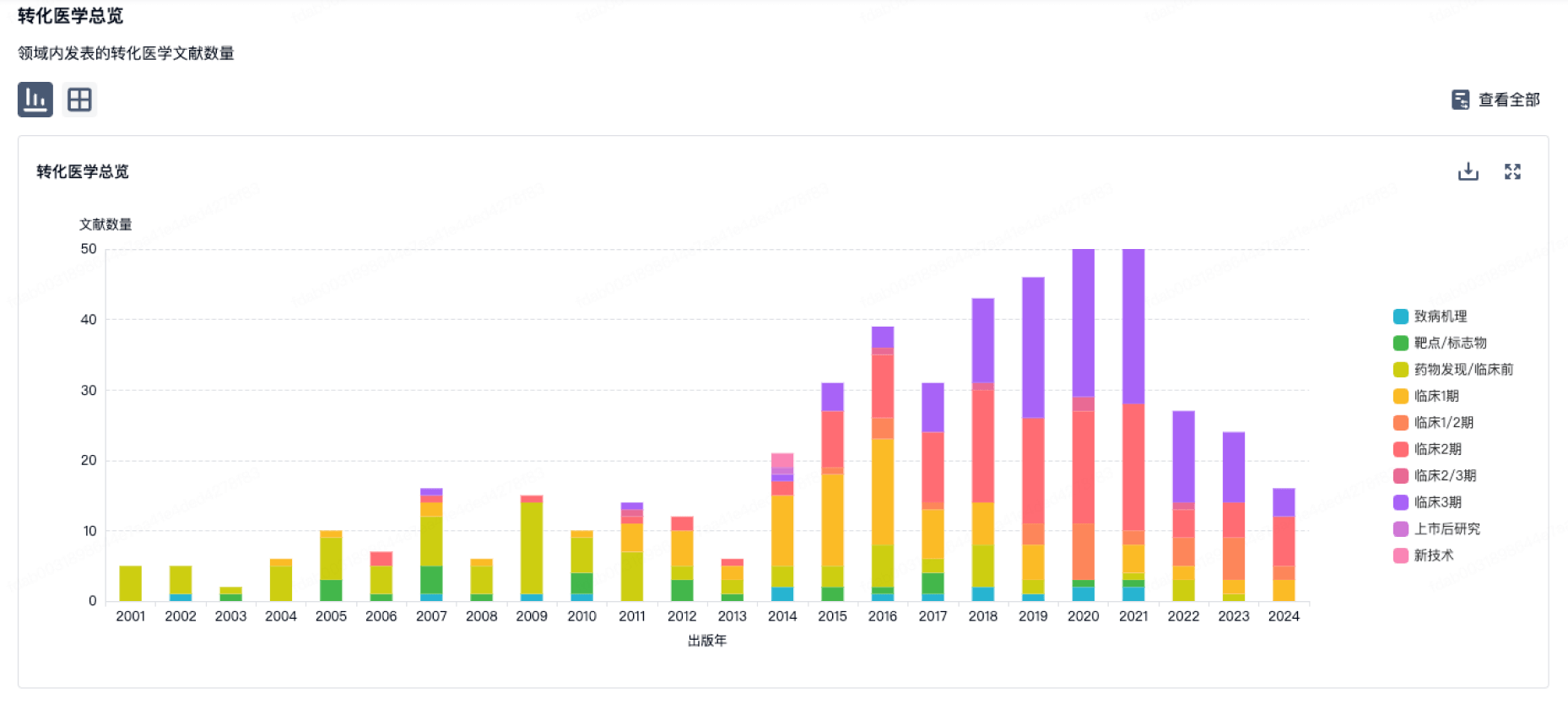

100 项与 École Polytechnique Fédérale de Lausanne 相关的转化医学

登录后查看更多信息

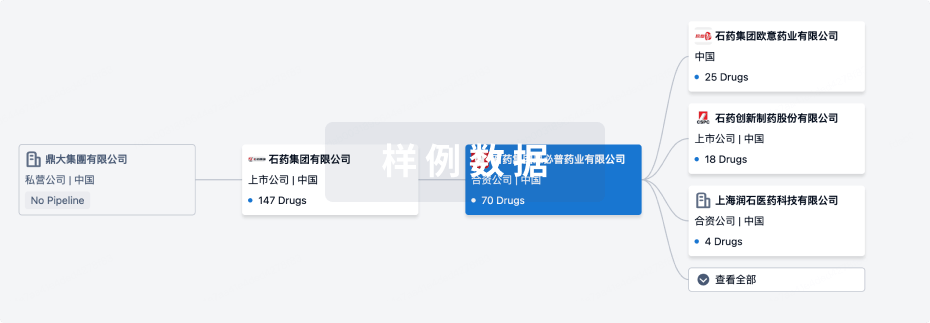

组织架构

使用我们的机构树数据加速您的研究。

登录

或

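Pipeline

Pipeline snapshot as of 1 November 2025

Drugs in the pipeline are counted for the current organization and its subsidiaries in their role as the drug's organization. Early Phase 1 is merged into Phase 1, Phase 1/2 into Phase 2, and Phase 2/3 into Phase 3.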
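The merging rule stated above can be sketched as a small lookup. This is a minimal illustration under stated assumptions, not the database's actual implementation: the English stage labels, `MERGED_STAGE`, `normalize_stage` and `pipeline_snapshot` are all hypothetical names.

```python
from collections import Counter

# Hypothetical mapping that mirrors the stated rule: each transitional stage
# is counted under the later, standard stage.
MERGED_STAGE = {
    "Early Phase 1": "Phase 1",
    "Phase 1/2": "Phase 2",
    "Phase 2/3": "Phase 3",
}


def normalize_stage(stage: str) -> str:
    """Return the bucket a stage is counted under; other stages are unchanged."""
    return MERGED_STAGE.get(stage, stage)


def pipeline_snapshot(stages: list[str]) -> Counter:
    """Count drugs per stage after applying the merging rule."""
    return Counter(normalize_stage(stage) for stage in stages)
```

For example, `pipeline_snapshot(["Early Phase 1", "Phase 1", "Phase 1/2"])` counts two drugs under "Phase 1" and one under "Phase 2".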

| Development stage | Drugs |
|---|---|
| Drug discovery | 3 |
| Preclinical | 9 |
| Phase 2 | 1 |
| Other | 4 |

药物交易

使用我们的药物交易数据加速您的研究。

登录

或

转化医学

使用我们的转化医学数据加速您的研究。

登录

或

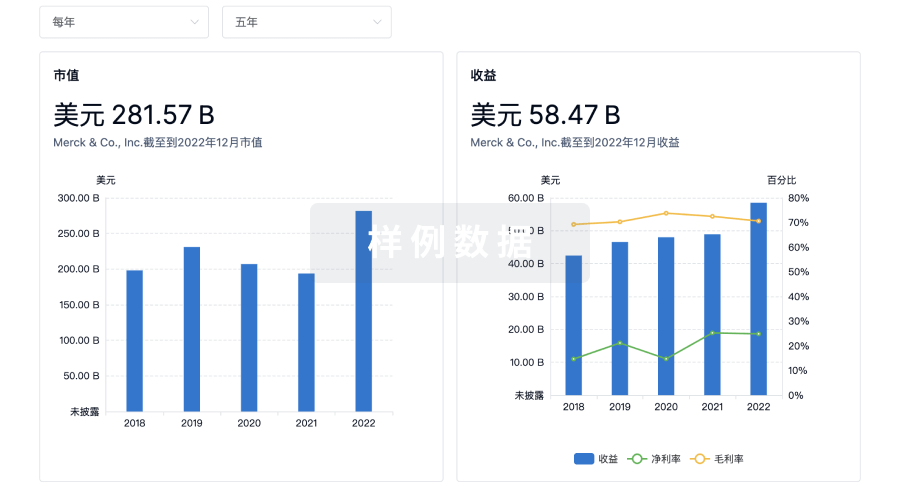

营收

使用 Synapse 探索超过 36 万个组织的财务状况。

登录

或

科研基金(NIH)

访问超过 200 万项资助和基金信息,以提升您的研究之旅。

登录

或

投资

深入了解从初创企业到成熟企业的最新公司投资动态。

登录

或

融资

发掘融资趋势以验证和推进您的投资机会。

登录

或

生物医药百科问答

全新生物医药AI Agent 覆盖科研全链路,让突破性发现快人一步

立即开始免费试用!

智慧芽新药情报库是智慧芽专为生命科学人士构建的基于AI的创新药情报平台,助您全方位提升您的研发与决策效率。

立即开始数据试用!

智慧芽新药库数据也通过智慧芽数据服务平台,以API或者数据包形式对外开放,助您更加充分利用智慧芽新药情报信息。

生物序列数据库

生物药研发创新

免费使用

化学结构数据库

小分子化药研发创新

免费使用